How RLHF and Reward Models Turn AI into a Business Advantage

AI can generate answers, but not all answers create value.

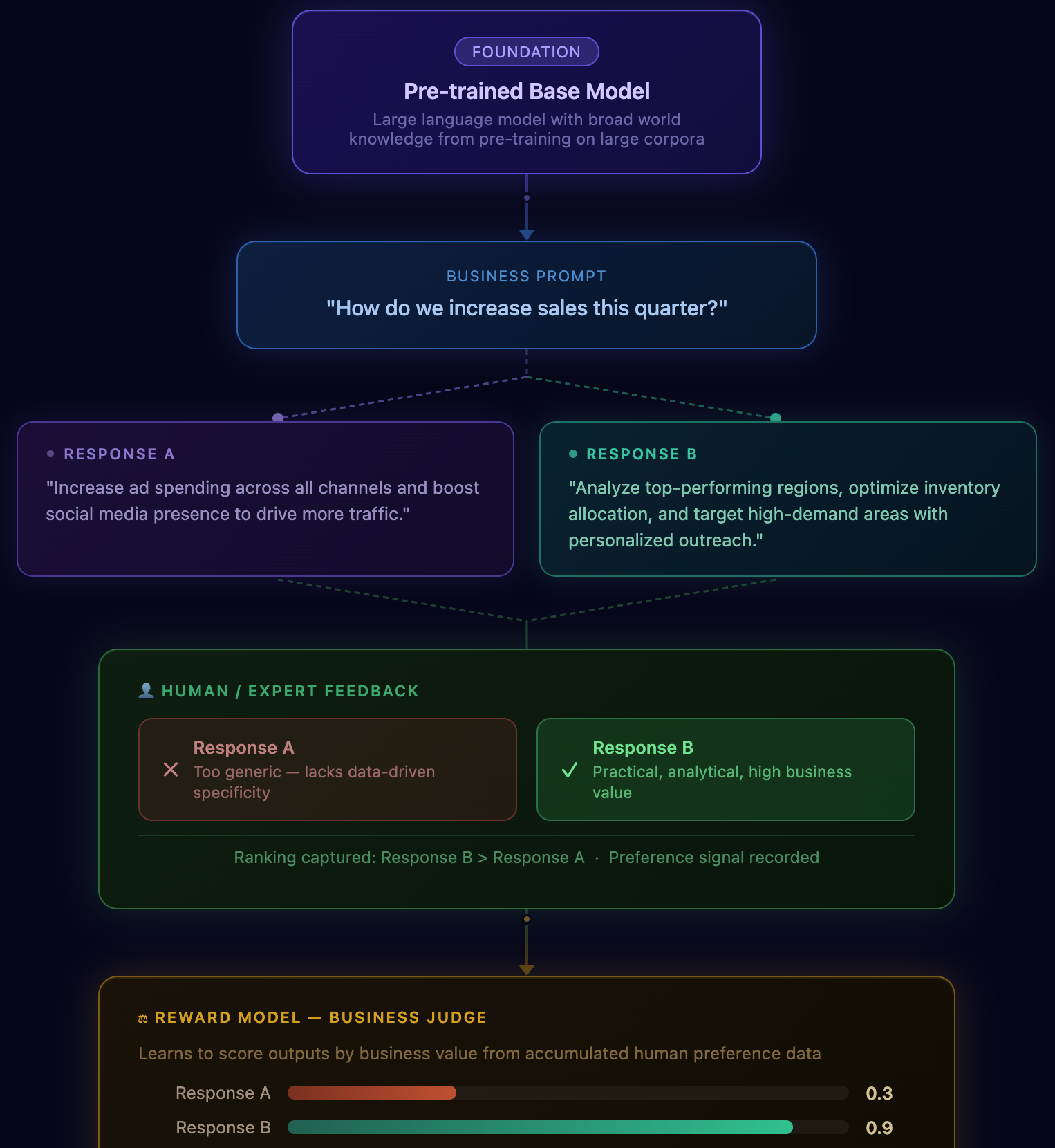

Reinforcement Learning from Human Feedback (RLHF) addresses this by training AI with human judgment. Instead of learning only what is correct, the model learns what is better. People compare responses, and training reinforces the ones that align with business goals.
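Published RLHF work commonly formalizes learning from comparisons with a pairwise (Bradley-Terry) model. The post does not say which loss it has in mind, so treat this as an illustrative sketch. Take a prompt $x$, a preferred response $y_w$, and a rejected response $y_l$. A reward model $r_\theta$ is trained so that the preferred response gets the higher score:

```latex
P(y_w \succ y_l \mid x) = \sigma\big(r_\theta(x, y_w) - r_\theta(x, y_l)\big),
\qquad
\mathcal{L}(\theta) = -\,\mathbb{E}_{(x,\, y_w,\, y_l)}\Big[\log \sigma\big(r_\theta(x, y_w) - r_\theta(x, y_l)\big)\Big]
```

Here $\sigma$ is the logistic sigmoid. Minimizing $\mathcal{L}$ widens the score gap in favor of whichever response the human rater chose.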

At the core is a Reward Model, which scores outputs based on:

- Business impact

- Actionability

- Strategic relevance
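The Python sketch below makes the scoring idea concrete. It assumes a toy linear reward over the three criteria listed above. Real reward models are usually neural networks that read the full response text. The criterion scores, the comparison data, and the names `reward` and `train_reward_model` are all hypothetical. The weights are learned from pairwise preferences by minimizing `-log(sigmoid(r_chosen - r_rejected))`.

```python
import math

# Each response is described by hypothetical criterion scores in [0, 1]:
# (business_impact, actionability, strategic_relevance).
# All numbers below are illustrative assumptions, not real ratings.
Features = tuple[float, float, float]


def reward(weights: list[float], x: Features) -> float:
    """Toy linear reward model: a weighted sum of the criterion scores."""
    return sum(w * f for w, f in zip(weights, x))


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_reward_model(
    pairs: list[tuple[Features, Features]], lr: float = 0.5, epochs: int = 200
) -> list[float]:
    """Fit weights by gradient descent on -log(sigmoid(r_chosen - r_rejected))."""
    weights = [0.0, 0.0, 0.0]
    for _ in range(epochs):
        for chosen, rejected in pairs:
            margin = reward(weights, chosen) - reward(weights, rejected)
            # d(loss)/d(margin) = -(1 - sigmoid(margin)); step against the gradient.
            step = lr * (1.0 - sigmoid(margin))
            for i in range(len(weights)):
                weights[i] += step * (chosen[i] - rejected[i])
    return weights


# Hypothetical human comparisons: (preferred response, rejected response).
PAIRS: list[tuple[Features, Features]] = [
    ((0.8, 0.9, 0.7), (0.6, 0.3, 0.4)),
    ((0.7, 0.8, 0.6), (0.7, 0.2, 0.5)),
    ((0.5, 0.7, 0.8), (0.6, 0.4, 0.3)),
]

if __name__ == "__main__":
    weights = train_reward_model(PAIRS)
    broad = (0.5, 0.3, 0.4)     # e.g. "spend more on ads everywhere"
    targeted = (0.8, 0.9, 0.8)  # e.g. "target high-demand regions"
    p = sigmoid(reward(weights, targeted) - reward(weights, broad))
    print("learned weights:", [round(w, 2) for w in weights])
    print(f"P(targeted preferred over broad) = {p:.2f}")
```

No one hand-sets the weights here. They come entirely from which responses raters preferred, and that is how a reward model absorbs an organization's judgment.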

Over time, the reward model becomes a digital representation of your organization's decision-making intelligence. The result:

• AI that prioritizes high-value decisions

• Faster, more consistent execution

• Institutional knowledge embedded into systems

• Continuous improvement through feedback

RLHF transforms AI from a tool that generates responses into a system that consistently drives better business outcomes.

Figure: RLHF with Reward Models (Reinforcement Learning from Human Feedback)

Pre-trained base model: a large language model with broad world knowledge from pre-training on large corpora.

Prompt: "How do we increase sales this quarter?"

Response A: "Increase ad spending across all channels and boost social media presence to drive more traffic."

Response B: "Analyze top-performing regions, optimize inventory allocation, and target high-demand areas with personalized outreach."
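After the reward model is trained, the pre-trained base model is fine-tuned to produce responses that the reward model scores highly. A common formulation adds a penalty for drifting too far from a reference model $\pi_{\text{ref}}$, typically the base or supervised fine-tuned model. This is an assumption, since the post does not name a specific method. The coefficient $\beta$ sets how strong that penalty is:

```latex
\max_{\pi_\phi}\;
\mathbb{E}_{x \sim \mathcal{D},\; y \sim \pi_\phi(\cdot \mid x)}
\left[ r_\theta(x, y) \;-\; \beta \log \frac{\pi_\phi(y \mid x)}{\pi_{\text{ref}}(y \mid x)} \right]
```

The penalty keeps the tuned model close to the broad knowledge of the base model while shifting it toward the kind of answers raters preferred.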
